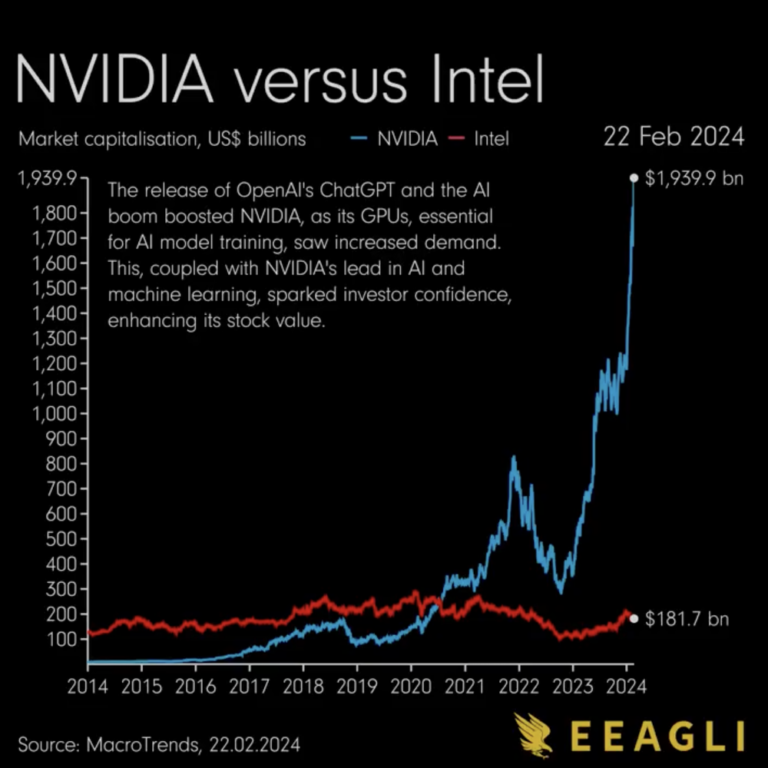

Nvidia, the dominant force in the world of computer chips, surpassed Alphabet and Amazon in market capitalization earlier this February and now stands at $1.98 trillion in value. The chipmaker is now the world’s fourth-biggest company by market cap, behind only Microsoft, Apple, and Saudi Aramco. Nvidia’s stock price has climbed over the last 18 months on the back of the artificial intelligence (AI) boom, and the recent record-breaking prices reveal how cut-throat the AI chip race is. Companies like Amazon, Microsoft, and Meta have been rushing to purchase Nvidia’s H100 chip, which helps them power AI projects. So, how did Nvidia end up dominating the chip market and become the fourth-most valuable company in the world?

Founded in 1993, Nvidia was initially known for designing cutting-edge chips for gaming and graphics. Gradually, the company expanded its horizons, venturing into diverse fields. Realizing the importance of AI as early as 2012, the chip giant started developing chips specialized for deep learning and AI computing. The company has not only developed the hardware but has also focused on building software, systems, and services.

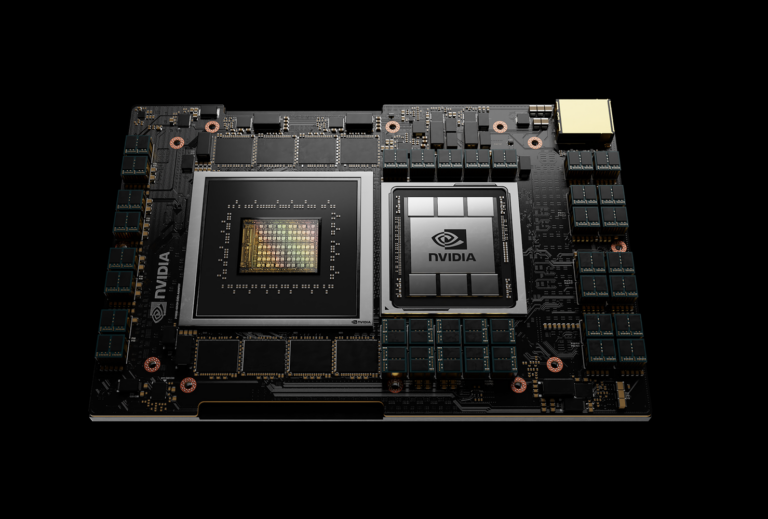

One of Nvidia’s key strengths lies in its graphics processing units (GPUs), which differ fundamentally from central processing units (CPUs), the most standard type of computer chip. CPUs handle complicated operations rapidly in sequential order. GPUs, on the other hand, can process many tasks at once, which makes them excel in parallel processing applications such as rendering graphics. That makes Nvidia’s H100 GPUs a great fit for AI developers who need to train neural networks and large language models (LLMs). These chips offer the speed, efficiency, and capability to manage intricate, data-intensive AI operations.

Nvidia’s innovative hardware plays a crucial role in accelerating AI computations. In fact, demand for these chips is so high that some companies have decided to stockpile hundreds of thousands of them. In January, Meta boss Mark Zuckerberg ordered some 350,000 Nvidia H100 GPUs. Although Nvidia has not specified the chip’s price, it is estimated to be around $30,000 apiece, meaning Meta would pay around $10.5 billion.
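The estimate above is simply unit count multiplied by unit price. A minimal sketch of that arithmetic follows, assuming the roughly $30,000 per-chip figure (an estimate, not a price Nvidia has disclosed). The function names are hypothetical, chosen only for illustration.

```python
# Back-of-the-envelope check of the H100 spending estimate.
# ASSUMPTION: $30,000 per chip is an outside estimate, not a disclosed price.
UNIT_PRICE_USD = 30_000


def total_cost(units: int, unit_price: float) -> float:
    """Total spend for a given number of chips at a flat unit price."""
    return units * unit_price


def implied_units(total: float, unit_price: float) -> float:
    """Number of chips a given total spend would buy at a flat unit price."""
    return total / unit_price


if __name__ == "__main__":
    # How many chips does a $10.5 billion bill correspond to?
    print(f"{implied_units(10.5e9, UNIT_PRICE_USD):,.0f} chips")
    # And the reverse: the bill for that many chips, in billions of dollars.
    print(f"${total_cost(350_000, UNIT_PRICE_USD) / 1e9:.1f} billion")
```

At a flat $30,000 per chip, a $10.5 billion total corresponds to about 350,000 chips. Any volume discount would lower the real figure.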

Once the industry leader, Intel was ahead of Nvidia for a long time, but the company fell behind in the AI chip race. Historically, Intel relied largely on CPUs to generate its revenue, which proved to be a successful strategy up until the early 2010s. Around that time, Intel’s main market, supplying CPUs to everyday consumers, reached its limit. Unlike tech enthusiasts and gamers, most individuals had little interest in upgrading their computers to get the newest chips. In the same period of stagnation for Intel, a new way of computing emerged. Students at Stanford who were researching AI realized that using GPUs instead of CPUs accelerated the AI training process. This breakthrough put another challenge on Intel’s plate, as its GPU technology was behind industry standards. Although Intel has recently started developing its own AI chips and data centers, lagging behind in the AI race has cost it dearly. In the third quarter of 2023, Intel’s data center revenue was reportedly $3.8 billion, down from $4.4 billion in the same quarter of 2022. Nvidia’s third-quarter 2023 revenue, on the other hand, was $14.3 billion, a massive increase from $3.8 billion in the third quarter of 2022.
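To put the two revenue trends on the same scale, the quarterly figures quoted above can be converted into year-over-year percentage changes. This is a minimal sketch that uses only the numbers in the paragraph. The function name is hypothetical.

```python
def yoy_change_pct(current: float, previous: float) -> float:
    """Year-over-year change as a percentage of the previous period's value."""
    return (current - previous) / previous * 100


# Third-quarter figures in billions of dollars, as quoted in the text.
intel_change = yoy_change_pct(current=3.8, previous=4.4)
nvidia_change = yoy_change_pct(current=14.3, previous=3.8)

print(f"Intel:  {intel_change:+.1f}%")
print(f"Nvidia: {nvidia_change:+.1f}%")
```

On these figures, Intel’s revenue shrank by roughly 14 percent while Nvidia’s grew by roughly 276 percent, nearly quadrupling in a year.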

While Nvidia currently holds a near-monopoly in the AI chip market, its dominance is not guaranteed, and there are potential challenges that could threaten its position. For one, the U.S. CHIPS and Science Act has had notable implications for Nvidia, particularly in terms of market access and supply chain dynamics. Nvidia faces challenges in accessing the Chinese market, which is a significant consumer of semiconductor chips and a key battleground for technological supremacy. As a result, the Nvidia-shaped gap in the Chinese chip market is being filled by Huawei. Nvidia recently acknowledged Huawei as a top rival in several areas, including AI chips. Secondly, tensions between the U.S. and China over Taiwan have sparked concern about the global semiconductor supply chain. Taiwan Semiconductor Manufacturing Co (TSMC), Nvidia’s chip manufacturer and the world’s biggest, has been affected by the CHIPS and Science Act as well. Although TSMC is Nvidia’s partner and produces its advanced semiconductor chips, the company’s operations are still subject to U.S. regulations. Moreover, the tensions between China and the U.S. add more uncertainty. TSMC needs to carefully manage its relationships with both countries and avoid being drawn into geopolitical disputes that could disrupt its operations.

Mainland Chinese chip manufacturers have also faced challenges because of the CHIPS Act. Export restrictions imposed by the U.S. government and backed by Dutch firm ASML have forced companies like SMIC (Semiconductor Manufacturing International Corporation) to pursue self-sufficiency in chip building. To help reach this independence, the Chinese government handed out $1.75 billion in subsidies in 2022, of which SMIC received the largest share, $270 million. Although the subsidy helped SMIC continue producing chips, without key equipment from ASML the company is nowhere near industry standards. Companies like Apple and Samsung use 3-nanometer chips in their phones, while SMIC is at the 5-nanometer mark. With a market cap of $25.9 billion, SMIC is now mass-producing these chips to supply Huawei.

As the competition grows fiercer every day, the question of whether Intel or SMIC can catch up to Nvidia remains a subject of ongoing debate.